Since the FakeCatcher findings were published, 27 researchers around the world have been using the algorithm and the dataset in their own analyses. Whenever these kinds of studies are made public, though, there are concerns about telling malicious deepfake makers how their videos have been shown to be false, allowing them to modify their work to be undetectable in the future.

Ciftci is not too worried about that, however: “It’s not going to be easy for someone who doesn’t know much about the science behind it. We learn the tricks and even use some of them in our own data creation.”

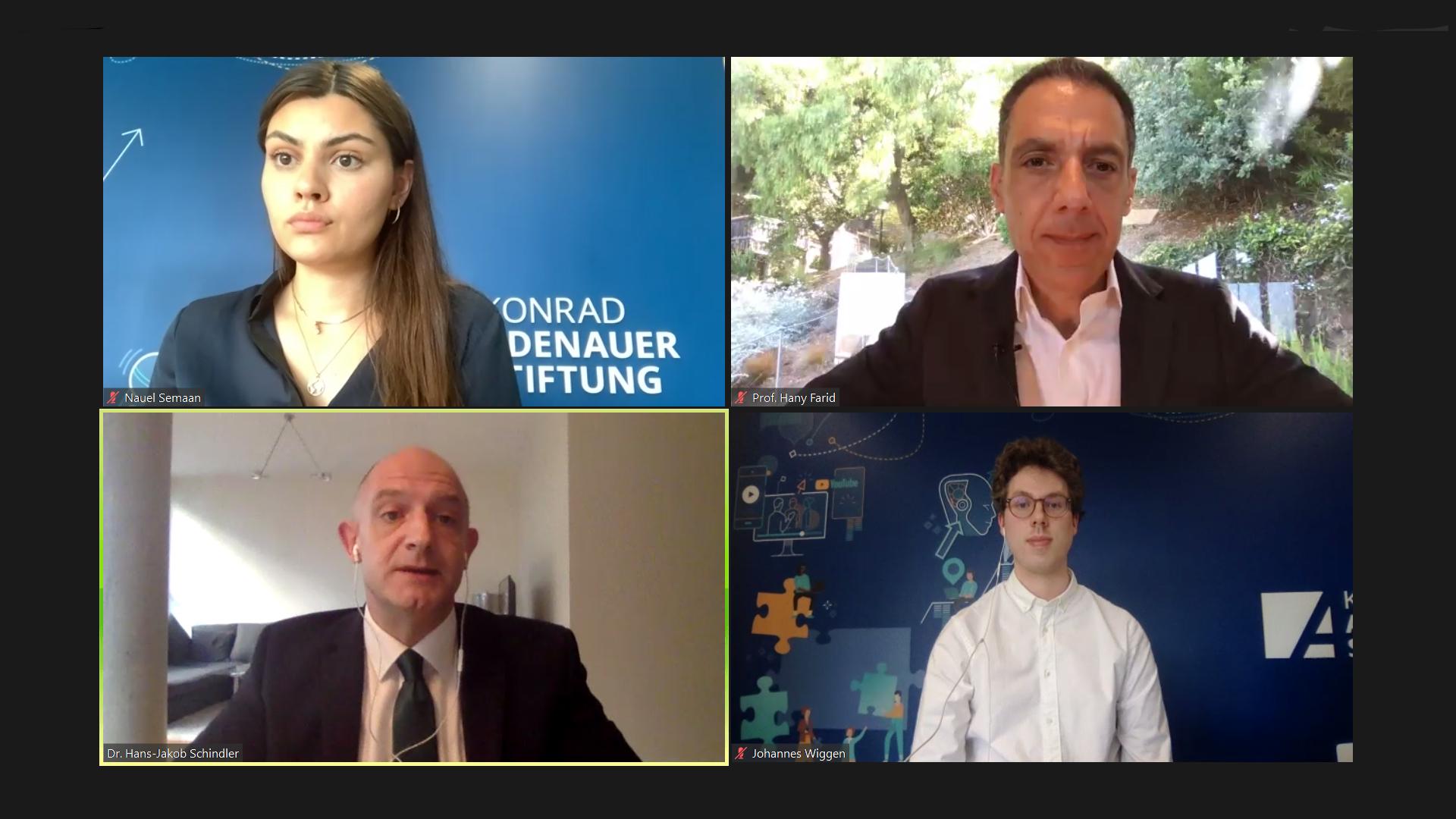

“In deepfakes, there is no consistency for heartbeats and there is no pulse information,” Demir said. “For real videos, the blood flow in someone’s left cheek and right cheek - to oversimplify it - agree that they have the same pulse.”

Working with Demir on the project is Umur A. Ciftci, a PhD student at Watson College’s Department of Computer Science, under Professor Lijun Yin’s supervision at the Graphics and Image Computing Laboratory, part of the Seymour Kunis Media Core funded by donor Gary Kunis ’73, LHD ’02. The project builds on Yin’s 15 years of work creating multiple 3D databases of human faces and emotional expressions. Hollywood filmmakers, video game creators and others have utilized the databases for their creative projects.

At Yin’s lab in the Innovative Technologies Complex, Ciftci has helped to build what may be the most advanced physiological capture setup in the United States, with 18 cameras as well as infrared imaging. A device strapped around a subject’s chest also monitors breathing and heart rate. So much data is acquired in a 30-minute session that it requires 12 hours of computer processing to render it.

“Umur has done a lot of physiology data analysis, and signal processing research started with our first multimodal database,” Yin said. “We capture data not just with 2D and 3D visible images but also thermal cameras and physiology sensors. The idea of using the physiology as another signature to see if it is consistent with previous data is very helpful for detection.”

Umur Ciftci, a PhD student in computer science, poses for a 3D scan in Professor Lijun Yin’s lab at the Innovative Technologies Complex. Ciftci’s doctoral thesis will focus on detecting “deepfake” videos. Image Credit: Jonathan Cohen.

Deepfakes found “in the wild” are many steps below the kind of quality that Yin’s lab generates, which means that manipulated videos can be much easier to spot.

“Considering that we work with 3D using our own capture setup, we generate some of our own composites, which are basically ‘fake’ videos,” Ciftci said. “The big difference is that we scan real people and use it, while deepfakes take data from other people and use it. It’s not that different if you think about it that way.

“It’s like the police knowing what all the criminals do and how they do it. You understand how these deepfakes are being done.”

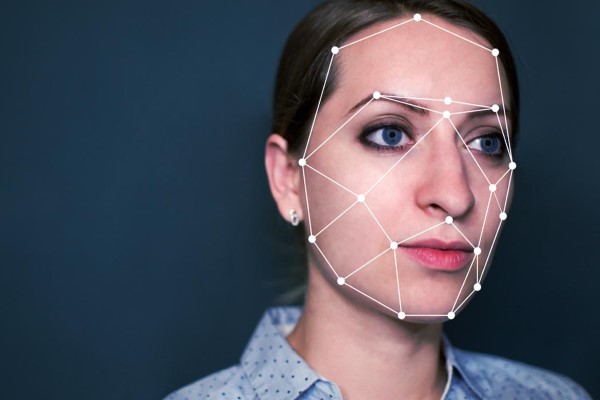

FakeCatcher works by analyzing the subtle differences in skin color caused by the human heartbeat. Photoplethysmography (abbreviated as PPG) is the same technique used for a pulse oximeter put on the tip of your finger at a doctor’s office, as well as Apple Watches and wearable fitness tracking devices that measure your heartbeat during exercise.

“We extract several PPG signals from different parts of the face and look at the spatial and temporal consistency of those signals,” said Ilke Demir, a senior research scientist at Intel.
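To make the idea of "consistency" between PPG signals concrete, here is a minimal Python sketch. It is not the FakeCatcher algorithm, whose details are not given here. It assumes each face region has already been reduced to a one-dimensional trace (for example, the average skin color per video frame), and the function names, thresholds and synthetic data are all hypothetical choices made for illustration.

```python
import numpy as np


def dominant_bpm(signal, fps, lo_bpm=40.0, hi_bpm=180.0):
    """Strongest frequency inside a plausible heart-rate band, in beats per minute."""
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()  # remove the constant skin-tone offset
    spectrum = np.abs(np.fft.rfft(x))
    freqs_bpm = np.fft.rfftfreq(x.size, d=1.0 / fps) * 60.0
    band = (freqs_bpm >= lo_bpm) & (freqs_bpm <= hi_bpm)
    return float(freqs_bpm[band][np.argmax(spectrum[band])])


def regions_agree(region_a, region_b, fps, max_bpm_gap=5.0, min_corr=0.5):
    """Hypothetical check: two regions share a pulse rate and move together over time."""
    bpm_gap = abs(dominant_bpm(region_a, fps) - dominant_bpm(region_b, fps))
    corr = float(np.corrcoef(region_a, region_b)[0, 1])
    return bpm_gap <= max_bpm_gap and corr >= min_corr


# Synthetic stand-ins for per-frame color traces from the left and right cheek.
fps = 30
t = np.arange(10 * fps) / fps              # 10 seconds of video
rng = np.random.default_rng(0)
pulse = np.sin(2 * np.pi * 1.2 * t)        # 1.2 Hz, i.e. 72 beats per minute

# "Real" video: both cheeks carry the same pulse plus independent sensor noise.
left_real = pulse + 0.3 * rng.standard_normal(t.size)
right_real = pulse + 0.3 * rng.standard_normal(t.size)

# "Fake" video: no shared pulse, only unrelated noise in each region.
left_fake = rng.standard_normal(t.size)
right_fake = rng.standard_normal(t.size)

print("real cheeks agree:", regions_agree(left_real, right_real, fps))
print("fake cheeks agree:", regions_agree(left_fake, right_fake, fps))
```

In this toy setup the two "real" traces share a 72-beats-per-minute rhythm and pass the check, while the two unrelated noise traces do not. A real detector would have to cope with head motion, lighting changes and video compression, none of which this sketch models.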
